Our Key Projects

Transforming Industries with AI

SnapDecor

To create stunningly realistic room transformations, we trained our AI model on a vast, diverse dataset of furniture styles, décor, textures, and materials. The AI intelligently suggests design elements, arranging furniture and décor to complement existing room layouts while accounting for unique factors such as lighting, color harmony, and spatial perspective.

Our solution provides users with interactive functionality to visualize different design options, swap furniture and décor in real-time, and make custom adjustments. The AI handles intricate details like shadow casting, natural light adjustments, and perspective alignment, allowing users to experience a true-to-life preview of their redesigned space.

This robust functionality enables users to seamlessly experiment with design concepts, ensuring that each virtual transformation feels as realistic as possible and inspires confidence in their design choices.

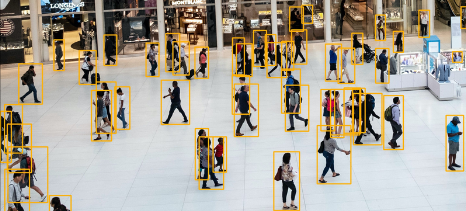

SAFEZONE

We implemented advanced object detection and tracking algorithms to enhance the accuracy of identifying suspicious activities in real-time. A key challenge was minimizing false positives, which we addressed through rigorous testing, fine-tuning of models, and iterative enhancements. This approach ensured a robust system capable of delivering reliable results even in complex environments.

TrashTalker

We tackled the complex challenge of differentiating between visually similar materials in waste management. By training our AI model on a diverse dataset of waste items, we developed a system capable of accurately identifying and sorting recyclables, compost, and landfill waste. This solution significantly improves sorting precision, reducing contamination and promoting efficient waste processing.

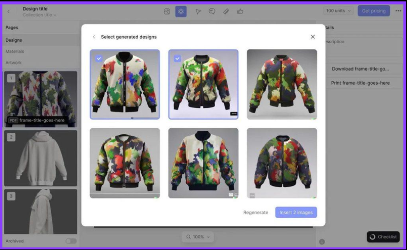

Fashionista360

We designed and implemented a custom AI model to deliver precise garment fitting directly onto users’ photos, creating an immersive virtual try-on experience. The system effectively addressed challenges related to diverse body shapes, sizes, and clothing styles by leveraging advanced computer vision and deep learning techniques. This innovation ensures realistic and accurate virtual fittings, enhancing user confidence and engagement in online fashion shopping.

DeepChef

We tackled the challenge of interpreting ingredient images captured under varying quality and lighting conditions. Through iterative refinements and advanced image recognition techniques, our AI model achieved high accuracy in identifying ingredients. This enabled the generation of creative, tailored recipes based on the input, transforming everyday cooking into an innovative and personalized experience.

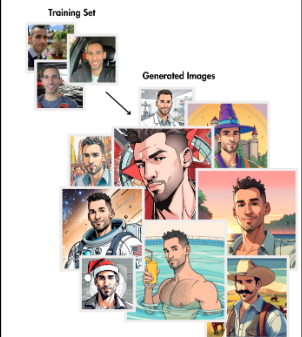

FaceSwapArt

We developed a cutting-edge AI system for seamless face merging, capable of adapting to diverse artistic styles and lighting conditions. By fine-tuning the model for precision and realism, we ensured users could effortlessly integrate their faces into any artistic masterpiece, delivering a unique and engaging creative experience.

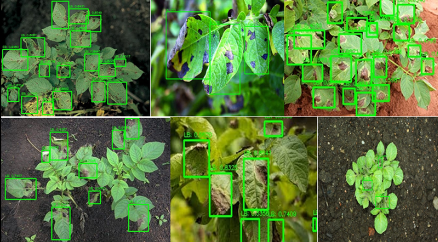

PlantWhisperer

Diagnosing plant issues from images posed a challenge due to the vast diversity of species and conditions. To address this, we extensively trained our AI model on a comprehensive dataset covering various plant types and health scenarios. Through meticulous fine-tuning, our system now delivers highly accurate diagnoses and personalized care recommendations, empowering users to effectively nurture their plants.

GraffitiGenius

We faced the challenge of preserving the unique and vibrant essence of graffiti art while seamlessly applying it to user-submitted photos. By collaborating closely with graffiti artists, we fine-tuned our AI model to ensure the output captured the authenticity, creativity, and dynamic style of true street art. This fusion of technology and artistry allows users to transform their images into striking, graffiti-inspired masterpieces.

CrowdMood

Analyzing emotions in crowded environments posed significant challenges due to occlusions, varying distances, and overlapping individuals. To address these complexities, we developed a robust AI model capable of efficiently processing intricate scenes. The system delivers precise sentiment analysis by leveraging advanced techniques in computer vision and deep learning, ensuring accurate insights even in dynamic, high-density settings.

PetMatch

Integrating shelter databases and optimizing our AI to account for the unique characteristics of diverse animal breeds was key to the success of this project. By fine-tuning the system to understand both user preferences and pet traits, we developed a solution that seamlessly connects users with adoptable pets that match their ideal criteria, enhancing the pet adoption experience.

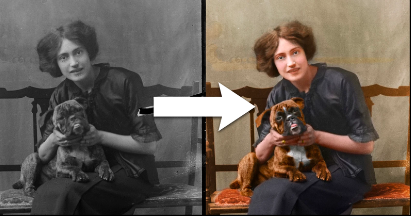

PicRestorer

We addressed the complex challenge of restoring severely damaged photos by training our AI on a diverse dataset of deteriorated images. The result is a powerful tool that can intelligently repair and revitalize photos, allowing users to recover and cherish precious memories with remarkable detail and clarity.

SpotTheDifference

Designing an AI that generates visually appealing yet challenging differences involved striking a delicate balance between creativity and difficulty. Through iterative refinement and testing, we enhanced the AI’s ability to produce engaging and satisfying experiences, ensuring each puzzle offers the perfect level of challenge while maintaining visual appeal.

VirtualTourGuide

We overcame the challenge of seamlessly integrating geolocation data with rich visual content by developing a dynamic AI system that intelligently adapts to users’ interests and travel preferences. This innovative solution personalizes travel experiences, providing tailored recommendations and engaging visual content based on real-time location data.

SketchMe

Our challenge was to preserve the distinctiveness of each photo while applying a consistent and artistic sketch style. By training our AI on a wide range of artistic techniques and fine-tuning its ability to adapt to individual images, we successfully created a tool that transforms photos into unique, stylized sketches while maintaining their original character.

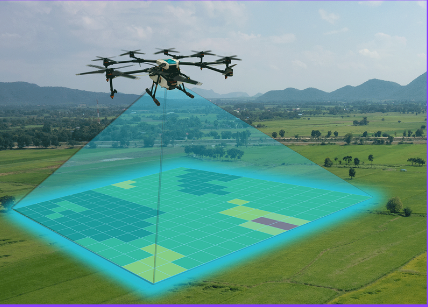

AerialAnalyzer

We tackled the challenges of processing high-resolution drone imagery and extracting meaningful insights by developing a robust AI system capable of analyzing complex infrastructure and environmental data. This solution enables accurate, real-time assessments, providing actionable intelligence for informed decision-making in areas such as construction, maintenance, and environmental monitoring.

SignLanguageTranslator

To achieve precise sign language translations, we curated and annotated a comprehensive dataset covering multiple sign languages. We overcame the challenge of varying dialects and signing styles by rigorously training our AI model, ensuring it can accurately interpret diverse gestures and provide reliable translations across different sign language communities.

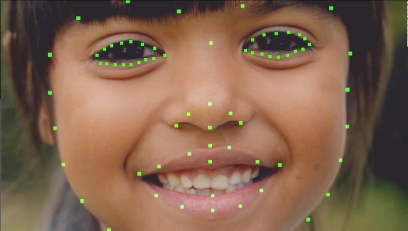

FaceGen

Creating realistic 3D face models from a single photo posed challenges related to lighting conditions and varying facial expressions. To overcome this, we developed an advanced AI model capable of generating accurate, detailed 3D face models that adapt seamlessly to different lighting and expression variations. This solution enables the creation of versatile models suitable for a wide range of applications, from virtual environments to identity verification.

ShopTheLook

We addressed the challenge of identifying and matching clothing items from social media images by developing an AI system that can efficiently recognize and find visually similar items across online stores. Through advanced image recognition and machine learning techniques, our AI provides users with a seamless shopping experience, allowing them to easily discover and purchase items they see on social media.

LicensePlateHunter

We faced the challenge of accurately recognizing license plates under diverse conditions while ensuring strict data privacy. To address this, we developed a secure and efficient AI solution that provides reliable, real-time results, empowering law enforcement and investigators with the tools needed for effective monitoring and analysis without compromising sensitive information.

RetailVision

We tackled the challenge of creating a personalized, interactive shopping experience within smart fitting rooms. By combining computer vision with advanced recommendation algorithms, we developed an AI system that enables users to virtually try on outfits and receive customized suggestions based on their preferences, ensuring a seamless and engaging shopping experience.

Market AI – Sales Prediction

Technologies: Python, Keras

Development Period: 2 months

Goal: To develop a machine learning model for analyzing and predicting product sales based on historical sales data from similar products.

Result: We successfully built a predictive model that forecasts product sales with an accuracy of approximately 87%, enabling more informed decision-making for inventory and marketing strategies.

Technologies Used: Python for data processing and Keras for building and training the model.
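The text above does not say how the 87% figure was measured. One common convention for sales forecasts, shown here purely as an assumption, is to report 100% minus the mean absolute percentage error (MAPE). The function name `forecast_accuracy` and the sample numbers are hypothetical and are not taken from the project.

```python
def forecast_accuracy(actual, predicted):
    """Return 100 minus the mean absolute percentage error (MAPE).

    Assumption: this is one possible definition of forecast accuracy,
    not necessarily the one used in the project. Actual values must be
    nonzero, because each error is divided by the actual value.
    """
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [abs(a - p) / abs(a) for a, p in zip(actual, predicted)]
    mape = 100.0 * sum(errors) / len(errors)
    return 100.0 - mape


# Hypothetical weekly unit sales and model forecasts.
actual_units = [100, 200, 400]
predicted_units = [90, 220, 360]
score = forecast_accuracy(actual_units, predicted_units)
print(f"accuracy: {score:.1f}%")
```

Each forecast in this sample is off by 10% of the actual value, so the score comes out at roughly 90%.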

Darktrace – Network Traffic Anomaly Detection

Technologies: Python

Development Period: 4 months

Goal: To analyze internet traffic within corporate networks to detect abnormal activities and potential security threats in real time.

Result: We developed a robust system that continuously monitors and analyzes corporate network traffic, promptly identifying and alerting users about any suspicious or anomalous activity, enhancing security and risk management.

Technologies Used: Python for network traffic analysis and anomaly detection.
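As a rough illustration of what flagging anomalous traffic can look like, the sketch below compares each new traffic sample against a rolling baseline and flags values that sit many standard deviations away. This is a generic z-score technique chosen for illustration, not a description of the project's actual models. The function name, window size, threshold, and sample data are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate sharply from the recent baseline.

    Illustrative rolling z-score detector; parameters are assumptions.
    """
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(samples):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the check when the baseline is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(i)
                continue  # keep flagged values out of the baseline
        history.append(value)
    return flagged


# Hypothetical bytes-per-second readings with one obvious spike.
bytes_per_second = [100, 102, 98, 101, 99, 100, 950, 103, 97]
print(detect_anomalies(bytes_per_second))
```

Keeping flagged values out of the baseline prevents a single spike from inflating the standard deviation and masking the samples that follow it.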

Text Replacement on Images

Technologies: Python, PyTorch

Development Period: 1.5 months

Goal: To develop a machine learning solution capable of seamlessly replacing text on images, ensuring that the new text matches the original in style, font size, and alignment.

Result: We created a tool that accurately replaces text on any surface in images, preserving the original look and feel, including font, size, and indentation, making the change indistinguishable from the original content.

Technologies Used: Python and PyTorch for image processing and text replacement model development.

Robotic Hand – Game Training with Machine Learning

Technologies: Python

Development Period: 3 months

Goal: To train a robotic hand controller to autonomously play various mini-games using machine learning techniques.

Result: We developed a simulation environment and a detailed robotic hand model, training multiple machine learning models to master different mini-games. The project demonstrated the robotic hand’s ability to adapt and perform tasks in diverse gaming scenarios, showcasing its precision and learning capabilities.

Technologies Used: Python for simulation development and machine learning model training.

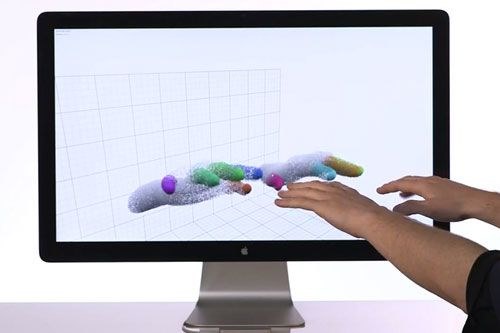

SmuDay-2 – Interactive Stand with AI-Powered Motion Control

Technologies: Python, Unity, React.js, Node.js

Development Period: 6 months

Goal: To create an interactive stand controlled by human movements, enabling users to engage dynamically and access information tailored to their needs.

Result: We developed an advanced application that leverages input from four cameras to track human movements, allowing for seamless interaction. The system adapts to user gestures and delivers information in real time, providing an engaging and personalized experience.

Key Features:

- Motion Tracking: Integrated AI-powered motion recognition for precise control.

- Dynamic Interaction: Delivers information based on user gestures and preferences.

- Technological Integration: Combines Unity for visuals, Python for AI models, and React.js with Node.js for a responsive and scalable architecture.

Felix – AI-Powered NFT Generation Platform

Technologies: Python, Node.js, React, Solidity

Development Period: 2 months

Goal: To develop a web platform that enables users to create and mint NFTs using AI-driven neural networks, transforming user inputs into unique digital assets.

Result: We successfully built an intuitive platform where users can generate NFTs using advanced neural networks. The system seamlessly processes user inputs, creates unique artwork, and facilitates minting on the blockchain, making NFT creation accessible and innovative.

Key Features:

- AI Integration: Leverages neural networks to generate artistic NFTs based on user-provided prompts.

- Blockchain Technology: Ensures secure minting and ownership of NFTs using Solidity smart contracts.

- User-Friendly Interface: Developed with React for a seamless and engaging user experience.

- Scalable Backend: Built with Node.js for efficient handling of user requests and data processing.

Aircraft Tracking – AI-Powered Static Camera Solution

Technologies: Python, OpenCV

Development Period: 1 month

Goal: To develop a tool capable of accurately tracking aircraft movements using a static camera and machine learning algorithms.

Result: We created an advanced application that leverages AI and computer vision to track aircraft with 96% accuracy. This solution provides reliable and efficient monitoring, even under varying environmental conditions, ensuring precise identification and tracking of aircraft.

Key Features:

- High Accuracy: Achieved 96% tracking accuracy through robust machine learning models.

- Computer Vision Integration: Utilized OpenCV for real-time image processing and analysis.

- Scalable Design: Designed for deployment in various settings, including airports and air traffic monitoring stations.

Lemmatization – AI-Powered Text Parsing and Video Translation for Accessibility

Technologies: Python

Development Period: 1 month

Goal: To develop a tool that parses text into parts of speech and sentence components, enabling the generation of videos with translations specifically designed for the deaf and mute community.

Result: We successfully created a cutting-edge solution that analyzes sentences based on meaning and context. This tool generates accurate and meaningful video translations, making text content more accessible to individuals with hearing and speech impairments.

Key Features:

- Semantic Parsing: Breaks down sentences into grammatical components and interprets their significance.

- Accessibility Focus: Generates videos with sign language translations, enhancing communication for the deaf and mute.

- Efficient Processing: Delivers seamless and real-time text-to-video transformation for accessibility applications.

AI Drawing Recognition – Automating Floor Plan Analysis

Technologies: Python, OpenCV

Development Period: 1.5 months

Goal: To develop an AI-powered mechanism capable of accurately identifying walls, gridlines, and matchlines in floor plan images.

Result: We successfully created a robust solution that detects walls, gridlines, and matchlines in architectural drawings with 90% accuracy. This tool significantly improves efficiency in analyzing and interpreting floor plan designs for architects and engineers.

Key Features:

- High Accuracy: Achieved 90% accuracy in identifying structural elements within floor plans.

- Automated Processing: Streamlined the analysis of complex architectural drawings, saving time and reducing manual effort.

- Computer Vision Integration: Used OpenCV to process and extract visual patterns from floor plan images effectively.

AI Speech Replacement – Seamless Audio and Articulation Transformation

Technologies: Python, PyTorch

Development Period: 1.5 months

Goal: To develop an advanced AI model capable of seamlessly replacing human speech in videos, synchronizing the audio with accurate lip movements and facial articulation.

Result: We built a sophisticated tool that imperceptibly replaces both the audio track and the speaker’s lip movements in videos. This technology ensures natural and realistic speech synchronization, making the modifications virtually undetectable.

Key Features:

- Lip-Sync Precision: Achieved highly accurate alignment of lip movements with replaced audio.

- Realistic Video Output: Delivered smooth and natural articulation that blends seamlessly into the original video.

- Versatile Applications: Ideal for dubbing, content editing, and video localization without compromising authenticity.

Maurizio – 360-Degree Image Creation with Fisheye Lens Integration

Technologies: Machine Learning, iOS Development (Swift)

Development Period: 3 months

Goal: To develop an iOS application that enables users to combine two images captured with an attached fisheye lens, creating a seamless 360-degree image experience.

Result: We successfully built an intuitive application that allows users to merge two fisheye lens images into a single, panoramic 360-degree view. The app enhances the user experience by enabling easy creation, viewing, and sharing of immersive 360-degree images directly from an iPhone.

Key Features:

- 360-Degree Image Creation: Combines fisheye lens images into panoramic views.

- Seamless Integration: Utilizes advanced algorithms to stitch images smoothly without visible seams.

- User-Friendly Interface: Intuitive design for easy capturing, combining, and viewing of 360-degree images.

- Efficient Processing: Optimized for fast image processing on iPhone, ensuring smooth performance.

AI Damage Detection for Car Sharing Vehicles

Technologies: Machine Learning, Android Development (Kotlin, Java), ARCore, OpenCV

Development Period: 5 months

Goal: To develop a mobile application that analyzes damage on car-sharing vehicles, allowing users to scan and document the condition of the car before and after use. The application also includes an integrated system that ensures users perform a thorough inspection by guiding them with augmented reality (AR) prompts.

Result: We successfully created an Android application that enables users to scan and document any damage to car-sharing vehicles. The app uses AR guidance to ensure users follow the correct procedure for damage reporting, confirming the completion of the scan. Machine learning models analyze the scanned images to detect any new damage, and users are notified of discrepancies between pre- and post-use conditions.

Key Features:

- Damage Detection: Machine learning algorithms analyze images for any visible damage on vehicles.

- AR-Assisted Scanning: Augmented reality tips guide users through the damage inspection process to ensure accurate image capture.

- User Verification: Integrated system verifies the completeness of the damage scan, ensuring proper documentation.

- Seamless Integration: The app is fully integrated with the car-sharing service, enabling users to easily document car conditions before and after use.
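The pre- and post-use comparison described above can be sketched as a simple set difference over detected damage records. The example is written in Python for brevity (the app itself is an Android application), and the `Damage` record, its fields, and the function `new_damages` are hypothetical names introduced only for illustration. It assumes the detection model has already produced one record per damaged area.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Damage:
    """Hypothetical record for one detected damage."""
    panel: str  # e.g. "front_left_door"
    kind: str   # e.g. "scratch" or "dent"


def new_damages(before, after):
    """Return damages found after the trip that were absent before it."""
    # Sorting gives a stable order for reporting to the user.
    return sorted(set(after) - set(before), key=lambda d: (d.panel, d.kind))


pre_trip = [Damage("rear_bumper", "scratch")]
post_trip = [
    Damage("rear_bumper", "scratch"),
    Damage("front_left_door", "dent"),
]
for damage in new_damages(pre_trip, post_trip):
    print(f"New {damage.kind} on {damage.panel}")
```

A real system would also need to match the same physical damage across two scans taken from slightly different angles, which this sketch sidesteps by comparing exact records.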

Shop AI Tracking

Technologies: Machine Learning, Android Development (Kotlin, Java), TensorFlow, Python

Development Period: 1 month

Goal: To develop a mobile application that accurately counts the number of people entering and exiting a store, providing valuable foot traffic data for business insights.

Result: We developed an AI-powered application that uses advanced computer vision techniques to track and count the number of people entering and exiting a store in real time. With an accuracy rate of 93%, the application provides reliable data on customer movement, helping retailers understand foot traffic patterns and improve store management.

Key Features:

- Real-Time People Counting: Uses computer vision and AI to track individuals entering and leaving the store.

- High Accuracy: Achieves 93% accuracy in detecting and counting people, even in busy or crowded environments.

- Data Insights: Provides store owners with valuable analytics on customer behavior and foot traffic.

- Seamless Integration: Fully integrated with Android devices, ensuring easy deployment and usability in any retail environment.
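One common way to turn tracked people into entry and exit counts is to watch for each person's centroid crossing a virtual line at the doorway. The sketch below assumes a tracker has already produced per-person centroid paths. The function name, the track format, and the choice of a horizontal line are illustrative assumptions, not details from the project.

```python
def count_crossings(tracks, line_y):
    """Count entries and exits from per-person centroid tracks.

    tracks: dict mapping a track id to a list of (x, y) centroids in
    frame order. Moving from above the line (y < line_y) to on or below
    it counts as an entry; the reverse counts as an exit.
    """
    entries = exits = 0
    for points in tracks.values():
        # Compare each centroid with the next one in the same track.
        for (_, y_prev), (_, y_curr) in zip(points, points[1:]):
            if y_prev < line_y <= y_curr:
                entries += 1
            elif y_curr < line_y <= y_prev:
                exits += 1
    return entries, exits


# Hypothetical tracks in image coordinates (y grows downward).
tracks = {
    1: [(50, 10), (52, 40), (55, 70)],  # crosses the line downward: entry
    2: [(80, 90), (78, 60), (75, 30)],  # crosses the line upward: exit
    3: [(20, 10), (22, 20), (25, 30)],  # never reaches the line
}
entries, exits = count_crossings(tracks, line_y=50)
print(f"entries={entries}, exits={exits}")
```

Counting line crossings rather than raw detections keeps someone who lingers in the doorway from being counted on every frame.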

AI Traffic Tracking

Technologies: Machine Learning, Desktop Development (C#), Android Development (Kotlin, Java)

Development Period: 12 months

Goal: To create an application for Windows and Android that analyzes and visualizes all types of network traffic, providing detailed insights into the data packets exchanged by the devices, with the exception of encrypted SSL traffic.

Result: We developed a cross-platform application capable of parsing and displaying network traffic across multiple protocols, including TCP, UDP, QUIC, and WebRTC. The application provides a comprehensive view of all unencrypted packet data, enabling users to monitor and analyze network activity in real time. This tool is particularly useful for network administrators, security analysts, and anyone seeking to understand their device’s communication patterns.

Key Features:

- Protocol Support: Parses and displays traffic for TCP, UDP, QUIC, WebRTC, and more, providing a thorough overview of device communication.

- Real-Time Traffic Monitoring: Allows users to monitor live network traffic, including detailed packet data.

- Cross-Platform Compatibility: Designed to work seamlessly on both Windows and Android platforms.

- SSL Exclusion: While encrypted SSL traffic is excluded, the application covers all other unencrypted data types for detailed analysis.

- User-Friendly Interface: Intuitive interface for both desktop and mobile platforms, making network monitoring accessible to both experts and casual users.
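To give a sense of what protocol parsing involves at the lowest level, the sketch below decodes the fixed 8-byte UDP header (source port, destination port, length, checksum, each a 16-bit big-endian field). It is written in Python for brevity, whereas the application itself was built with C# and Kotlin/Java. The function name and the sample datagram are hypothetical.

```python
import struct


def parse_udp_header(datagram: bytes) -> dict:
    """Decode the 8-byte UDP header and return its fields plus the payload."""
    if len(datagram) < 8:
        raise ValueError("UDP header requires at least 8 bytes")
    # "!HHHH": network byte order, four unsigned 16-bit integers.
    src, dst, length, checksum = struct.unpack("!HHHH", datagram[:8])
    return {
        "src_port": src,
        "dst_port": dst,
        "length": length,      # header plus payload, in bytes
        "checksum": checksum,
        "payload": datagram[8:],
    }


# Build a hypothetical datagram: port 53124 -> port 53, 5-byte payload.
sample = struct.pack("!HHHH", 53124, 53, 8 + 5, 0) + b"hello"
header = parse_udp_header(sample)
print(header["src_port"], header["dst_port"], header["length"])
```

Protocols such as QUIC and WebRTC media travel inside UDP datagrams, so a header decode like this is typically the first step before any higher-level parsing.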

AI Poker Assistant

Technologies: Machine Learning, Desktop Development (C#, WPF), Tesseract, OpenCV, WinAPI

Development Period: 6 months

Goal: To create an intelligent poker assistant application that helps players by identifying weaker opponents (“fish”) and automating various tasks to enhance the gaming experience.

Result: We developed a Windows-based application that intelligently parses tables from several popular online poker rooms to identify “fish”, players who are more likely to lose money due to poor decision-making. The application automatically seats players at optimal tables based on the identified opportunities and performs various other auxiliary functions to aid in strategy, efficiency, and ease of play.

Key Features:

- Opponent Identification: Utilizes machine learning to scan poker tables, identifying weaker players (“fish”) based on patterns and behaviors, offering players a strategic advantage.

- Automatic Table Management: Automates the process of sitting at optimal tables by analyzing the current player pool and identifying profitable opportunities.

- Real-Time Assistance: Assists players with real-time, actionable information to help make better decisions during gameplay.

- Advanced Parsing and Image Recognition: Leverages technologies like Tesseract for optical character recognition (OCR) and OpenCV for image processing to extract data from poker room interfaces.

- Comprehensive User Experience: Built with C# and WPF to offer a smooth, user-friendly interface with integrated functionality for seamless gaming support.

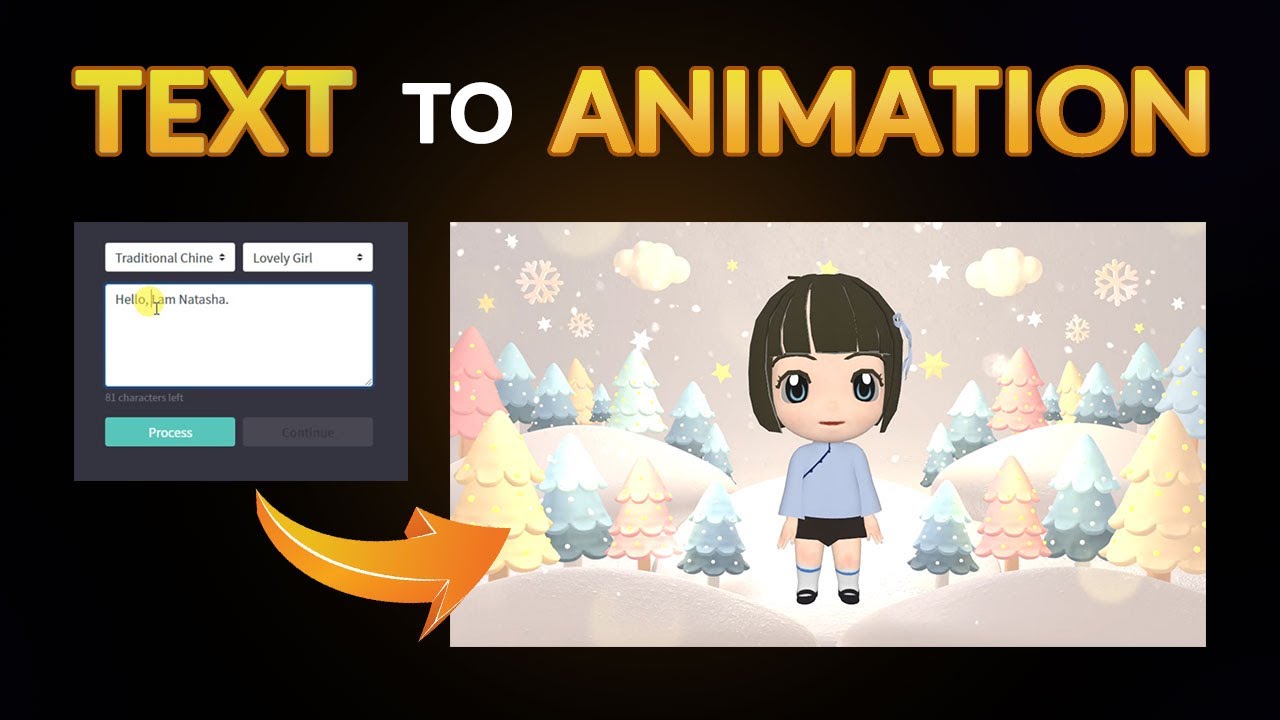

Tivli

Technologies: Machine Learning, Unity3D Development, Web Development, Frontend Development, Backend Development

Development Period: 4 months

Goal: To develop an innovative web-based system that converts user input (text) into fully animated video content, providing a seamless and interactive experience for content creators and users.

Result: We successfully created a web portal that leverages machine learning and Unity3D technology to transform user-provided text into dynamic, animated videos. The system automatically generates animations based on the input text, enabling users to create professional-quality animations without prior animation skills.

Key Features:

- Text-to-Animation Conversion: Users can input text, and the system automatically converts it into high-quality animated videos.

- Customizable Animations: The platform allows for customization of animation styles, character movements, backgrounds, and more to fit the user’s vision.

- Web Application: The platform is accessible through a browser, providing a user-friendly interface for easy access to animation tools.

- Real-Time Processing: The system ensures quick rendering of animations, offering users an efficient, real-time creation experience.

- Interactive Interface: Developed using React.js for seamless frontend interactions and Node.js for robust backend performance, delivering a smooth, responsive experience.

This project empowers individuals and businesses to create engaging animated videos in minutes, without the need for specialized animation software or expertise.

Adoreal

Technologies: Machine Learning, Unity3D, iOS Development

Development Period: 6 months

Goal: To create an iOS application that enables users to visualize potential outcomes of facial plastic surgery by “trying on” different facial modifications in real time using augmented reality (AR) and machine learning technologies.

Result: We successfully developed an iOS application that integrates advanced AR and machine learning to allow users to see realistic facial transformations before undergoing plastic surgery. By leveraging ARFoundation and Unity, the app simulates the effects of various surgical procedures on the user’s face in real time, providing a highly interactive and personalized experience.

Key Features:

- Real-time Visualization: Users can see potential facial changes, such as rhinoplasty, chin reshaping, and other procedures, using their own facial features.

- Augmented Reality: AR technology overlays the proposed changes onto the user’s face in the live camera feed, offering an immersive and interactive trial experience.

- Personalized Suggestions: The app uses machine learning algorithms to recommend realistic transformations based on the user’s facial structure and preferences.

- User-Friendly Interface: Designed with a sleek and intuitive interface, allowing users to easily navigate through different options and see their face in various scenarios.

This app empowers users to make informed decisions about cosmetic surgery, helping them visualize the potential results and improving the consultation process with surgeons.

Get in touch for a free consultation and discover how our expertise can bring your ideas to life.

We’ve successfully delivered dozens of AI, ML, Computer Vision, Web, and Mobile solutions across industries — and this portfolio shows just a glimpse of what we can achieve.